Unpacking Data Center Facilities: A Comprehensive Guide

Why Data Center Facilities Power Our Digital World

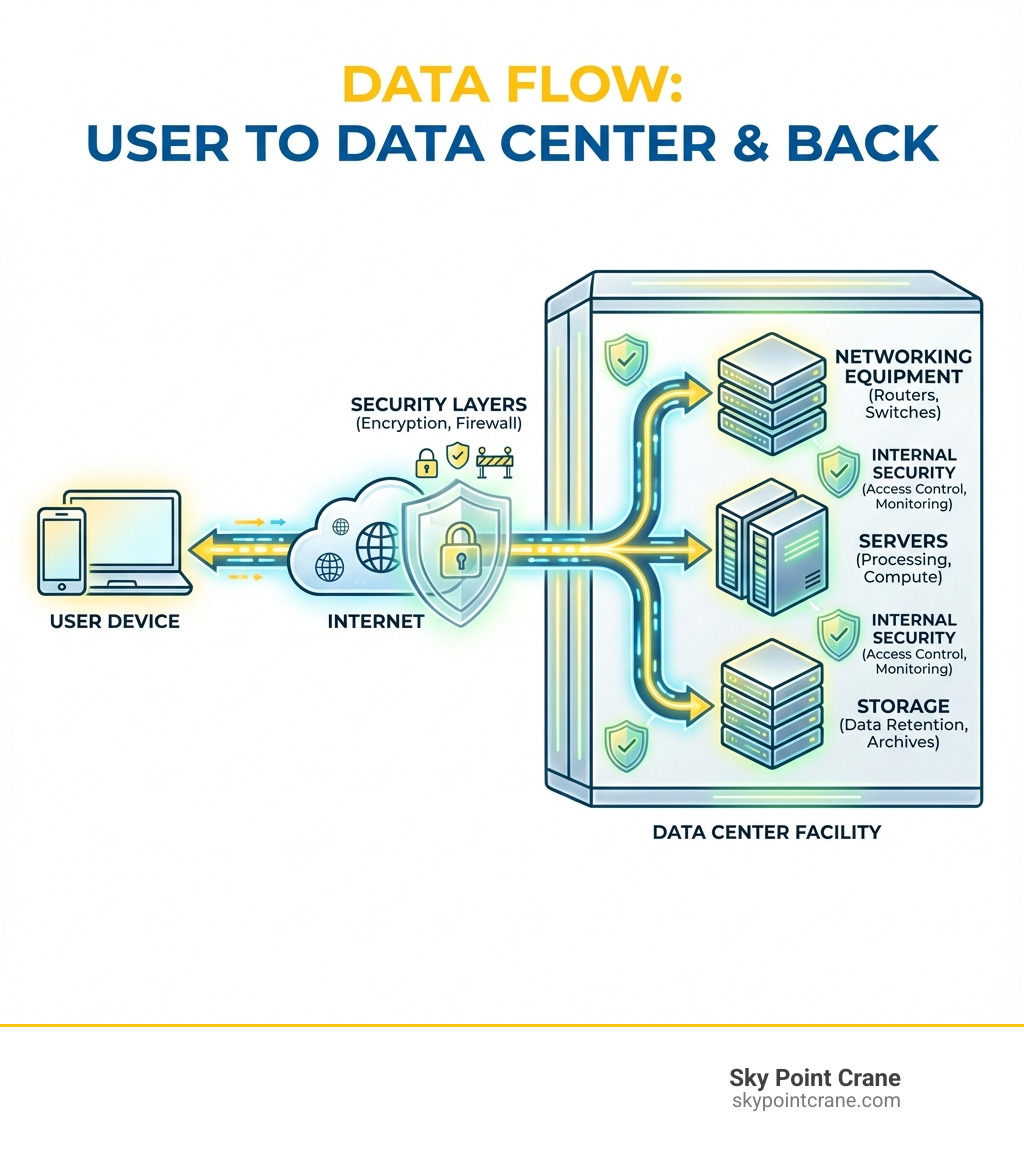

A data center facility is a specialized building housing the computing infrastructure, storage systems, and networking equipment that keep businesses, governments, and internet services running 24/7. These facilities are the physical backbone of our digital economy, powering everything from cloud storage and email to streaming services and artificial intelligence.

What defines a data center facility:

- Purpose: Centralized location for storing, processing, and distributing digital data

- Core Components: Servers, storage systems, networking equipment, power infrastructure, cooling systems, and security measures

- Key Features: Redundant power supplies, advanced cooling, physical security, and high-speed connectivity

- Types: Enterprise (owned by one company), colocation (shared space), cloud (public providers like AWS), and edge (localized for low latency)

- Uptime Standard: The most demanding facilities target 99.999% availability (less than 6 minutes of downtime per year)

The demand for data center facilities is exploding. Global data creation is projected to reach over 180 zettabytes by 2025, driving industry growth of 10% annually through 2030.

This guide breaks down everything about data center facilities—their evolution, core components, design standards, and the future challenges they face. Whether you’re planning construction for a new facility or simply curious about the buildings that power our connected world, you’ll find clear answers here.

I’m Dave Brocious, and through my work at Sky Point Crane, I’ve supported the construction of critical infrastructure projects, including the complex rigging and crane operations essential to data center facility construction. These projects require precision lifting of heavy equipment like UPS systems, generators, and cooling units—work that can’t afford mistakes when the facility must deliver 99.999% uptime.


The Evolution of Data Centers: From ENIAC to Hyperscale

The history of the data center facility begins in the 1940s with room-sized machines like the Electronic Numerical Integrator and Computer (ENIAC). These early “computer rooms” established the need for specialized environments to house critical computing systems.

The mainframe era continued this trend, but the 1990s microcomputer boom led to the rise of “servers” and dedicated “data centers” to house growing IT assets.

The dot-com boom of the late 1990s and early 2000s accelerated this evolution. Companies scrambled to establish an online presence, creating an insatiable demand for reliable internet infrastructure. This era saw the proliferation of “telco hotels” or “carrier hotels” to house server farms. As The New York Times reported in 2000, these facilities were the “engine room for the Internet,” cementing the term ‘data center’ in our lexicon.

In the 2000s, cloud computing brought another disruption by moving computing resources into large, shared, virtualized environments. That shift led to the emergence of hyperscale facilities, vast purpose-built data centers operated by tech giants like Amazon, Google, and Microsoft. These facilities can span over a million square feet, housing hundreds of thousands of servers to power the global cloud.

The data center industry is projected to continue its rapid expansion, growing at 10% a year through 2030, highlighting the critical role of the data center facility in our increasingly digital lives.

Core Components of a Modern Data Center Facility

Beneath its unassuming exterior, a modern data center facility is a complex ecosystem of technology and infrastructure working to keep our digital world running. It comprises IT equipment for computing, facility systems for environmental management, support infrastructure for power and connectivity, and operational staff for seamless functioning.

The Computing Core: Servers, Storage, and Networking

This is where the actual processing and storage of data happens.

- Servers: The workhorses of the data center, servers execute computational tasks, host applications, and run everything from email to complex AI.

- Enterprise Data Storage: Data centers use various storage systems, from hard disk drives (HDDs) for bulk storage to solid-state drives (SSDs) for high-speed access. Storage Area Networks (SANs) consolidate these resources, ensuring business-critical data is accessible and protected.

- Data Processing: Data centers are processing powerhouses, enabling demanding applications like big data analytics, artificial intelligence (AI), and machine learning (ML).

- Network Infrastructure: Sophisticated network infrastructure, including routers, switches, and fiber optic cabling, facilitates high-speed communication between servers, storage, and external networks.

- Connectivity: A data center’s value is tied to its connectivity, which ensures seamless access for users and applications, both internally and externally.

Power Infrastructure: Ensuring Constant Uptime

Power is the lifeblood of any data center facility. Ensuring a continuous, stable supply is paramount.

- Power Systems: Data centers require massive, reliable electricity delivery to thousands of servers and cooling units.

- Uninterruptible Power Supply (UPS): These battery backup systems provide immediate power during brief outages, giving generators time to start without disrupting operations.

- Backup Generators: For longer outages, massive diesel or natural gas generators take over. They are tested regularly to ensure they can carry the full facility load.

- Power Distribution Units (PDUs): These units distribute power to individual server racks and often include monitoring capabilities.

- Redundancy (N+1, 2N): Redundancy prevents single points of failure. N is the capacity needed to carry the full load. An N+1 design adds one spare component beyond that, while a 2N design duplicates the entire system so that either side can carry the load alone if the other fails.

- Power as a Key Design Consideration: Power availability and cost are central to data center design, as electricity can exceed 10% of the total cost of ownership (TCO). Efficient power design and installation are critical aspects of data center facility construction.
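
The redundancy schemes above come down to simple counting. Here is a minimal sketch, assuming identical units (for example, UPS modules) and a hypothetical 1,200 kW load served by 500 kW modules. The function name and the numbers are illustrative only, not a sizing method from any standard.

```python
import math

def units_required(load_kw: float, unit_capacity_kw: float, scheme: str) -> int:
    """Return how many identical units a redundancy scheme calls for."""
    # N is the minimum number of units needed to carry the load with no spare.
    n = math.ceil(load_kw / unit_capacity_kw)
    if scheme == "N":
        return n
    if scheme == "N+1":
        return n + 1      # one spare unit beyond the minimum
    if scheme == "2N":
        return 2 * n      # a complete second system
    raise ValueError(f"unknown redundancy scheme: {scheme}")

# Hypothetical example: 1,200 kW of load, 500 kW per module.
for scheme in ("N", "N+1", "2N"):
    print(scheme, units_required(1200, 500, scheme))
```

In this example the facility needs 3 modules with no redundancy, 4 under N+1, and 6 under 2N, which is why higher redundancy means more equipment to purchase, lift, and install.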

Cooling and Airflow Management

All that computing generates immense heat. Effective cooling is crucial for performance and longevity. Learn more about Data Center Cooling.

- HVAC Systems: HVAC systems provide baseline environmental control.

- Hot Aisle/Cold Aisle Containment: This efficient strategy arranges server racks to separate cold air intake from hot air exhaust, with physical barriers preventing them from mixing.

- Computer Room Air Conditioners (CRAC): These specialized units circulate and cool the air within the data center.

- Liquid Cooling and Immersion Cooling: As server densities increase with AI workloads, traditional air cooling is often insufficient. Advanced methods like liquid cooling and immersion cooling are becoming more common because liquid carries heat away far more effectively than air. These methods can achieve very low Power Usage Effectiveness (PUE) values, as detailed in resources about advanced cooling for AI workloads.

- Cooling Costs: Cooling can account for 35-45% of a data center’s TCO, making efficient design crucial for reducing operational expenses.
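
To get a feel for why dense racks strain air cooling, here is a rough sketch using the common sensible-heat rule of thumb for air (BTU/hr = 1.08 × CFM × ΔT in °F). The 20 °F temperature rise across the rack and the rack sizes are assumptions chosen for illustration, and a real design would rely on an engineer's calculations rather than this approximation.

```python
BTU_PER_HR_PER_WATT = 3.412   # 1 watt of IT load becomes about 3.412 BTU/hr of heat
AIR_HEAT_FACTOR = 1.08        # rule-of-thumb constant for air near sea level

def required_airflow_cfm(rack_power_kw: float, delta_t_f: float = 20.0) -> float:
    """Approximate airflow (cubic feet per minute) needed to remove a rack's heat.

    delta_t_f is the assumed air temperature rise across the rack, in °F.
    """
    heat_btu_per_hr = rack_power_kw * 1000 * BTU_PER_HR_PER_WATT
    return heat_btu_per_hr / (AIR_HEAT_FACTOR * delta_t_f)

# Hypothetical rack sizes: a traditional rack and two denser ones.
for kw in (5, 20, 80):
    print(f"{kw} kW rack: about {required_airflow_cfm(kw):,.0f} CFM")
```

Under these assumptions a 5 kW rack needs roughly 800 CFM, while an 80 kW rack needs more than 12,000 CFM. Airflow scales directly with power, which is one reason liquid cooling becomes attractive at high densities.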

Security and Fire Suppression

Protecting valuable data and equipment from physical and environmental threats is non-negotiable.

- Physical Security Measures: A data center facility uses layered physical security, including perimeter fencing, guards, 24/7 surveillance, and strict access control with biometrics, keycards, and mantraps.

- Fire Suppression Systems: To combat the catastrophic threat of fire, facilities use advanced smoke detection and specialized fire suppression. Instead of water, they often use gaseous fire suppression agents, like inert gases, which extinguish fires without damaging electronics.

- Cybersecurity Measures: Robust cybersecurity, including firewalls, intrusion detection systems, and encryption, is essential to protect data from digital threats.

Types of Data Centers: Finding the Right Fit

Just as businesses come in different shapes and sizes, so do data center facility options. Choosing the right type depends on factors like control, cost, scalability, and performance requirements.

| Type of Data Center | Cost | Control | Scalability | Use Case |
|---|---|---|---|---|
| Enterprise | High | High | Moderate | Dedicated resources for a single organization, sensitive data |
| Colocation | Medium | Medium | High | Outsourcing physical space, retaining IT management, hybrid cloud |
| Cloud | Low | Low | Very High | Flexible, on-demand resources, rapid deployment, global reach |
| Edge | Medium | Medium | Moderate | Low-latency applications, IoT, local data processing |

Enterprise Data Centers

These are traditional, privately owned facilities built and operated by a single organization for its exclusive use.

- Owned and Operated by a Single Organization: An enterprise data center provides complete control over infrastructure, security, and operations.

- On-premises: Typically located on the company’s property or in a dedicated building.

- High Control; High Capital Expenditure: This model offers maximum control but requires significant upfront capital and ongoing operational costs.

- For more in-depth information, explore More on Enterprise Data Centers.

Colocation Facilities

Colocation allows businesses to house their IT equipment in a third-party provider’s facility.

- Renting Space in a Third-Party Facility: Businesses lease space in a provider’s data center. The provider handles the building, power, cooling, and security, while the customer manages their own IT equipment.

- Shared Power and Cooling Infrastructure: This model allows businesses to leverage the provider’s robust and efficient infrastructure.

- Reduced Capital Cost; Multi-tenant: Colocation significantly reduces capital expenditure. We see a strong presence of these facilities throughout our service areas, including Pittsburgh, Western Pennsylvania, Central Pennsylvania, Ohio, West Virginia, and Maryland, offering local businesses flexible options.

Cloud and Hyperscale Data Centers

These are the massive facilities that power public cloud services.

- Operated by Public Cloud Providers: Companies like Amazon Web Services (AWS), Microsoft Azure, and Google Cloud operate vast networks of hyperscale data centers.

- Massive Scale; Virtualized Resources; Pay-as-you-go Model: Cloud data centers offer immense scalability with a pay-as-you-go model for virtualized resources. They use layered security and multi-Availability Zone (AZ) designs for high reliability. To learn how providers like AWS secure and define their data centers, see "What is a Data Center?". General information is also available in the Frequently Asked Questions.

- Supports Digital Transformation: Cloud data centers are crucial enablers of digital transformation, providing flexible infrastructure for rapid innovation.

Edge Data Centers

A newer, rapidly growing category, edge data centers bring computing closer to the data source.

- Smaller, Localized Facilities: Unlike massive hyperscale centers, edge data centers are smaller and distributed geographically.

- Proximity to End-users; Low Latency for IoT and 5G; Decentralized Computing: Their main advantage is low latency, achieved by processing data near its source. This is vital for applications like the Internet of Things (IoT), autonomous vehicles, and 5G networks. This decentralized approach helps manage the explosion of data generated outside traditional data centers.
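
The latency advantage of proximity can be estimated from physics alone. This sketch assumes light travels through optical fiber at roughly 200,000 km per second and ignores routing, queuing, and processing delays, so the results are best-case floors rather than real-world measurements. The distances are hypothetical.

```python
FIBER_SPEED_KM_PER_MS = 200.0  # roughly 200,000 km/s, about two-thirds the speed of light in a vacuum

def round_trip_ms(distance_km: float) -> float:
    """Best-case round-trip propagation delay over fiber, in milliseconds."""
    return 2 * distance_km / FIBER_SPEED_KM_PER_MS

# Hypothetical distances between a user and three kinds of facility.
sites = (("edge site", 50), ("regional data center", 500), ("distant cloud region", 3000))
for label, km in sites:
    print(f"{label}: {round_trip_ms(km):.1f} ms round trip")
```

A site 50 km away has a floor of about 0.5 ms, while one 3,000 km away cannot do better than about 30 ms. For applications that need responses within a few milliseconds, only a nearby facility can meet the requirement.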

Uptime, Reliability, and Industry Standards

In data center facility operations, uptime is the supreme metric. For businesses, downtime means lost revenue, damaged reputation, and frustrated customers.

- Uptime Measurement; The “Nines” of Availability: Uptime is expressed in “nines” of availability. “Five nines” (99.999%) equals less than 6 minutes of unplanned downtime per year, requiring exceptional design and operational discipline.

- Service Level Agreements (SLAs): Data center providers offer SLAs that guarantee a specific uptime level, defining performance expectations and penalties for failure.

- Fault Tolerance: A fault-tolerant data center facility can continue operating despite component failures by using redundant systems and automatic failover. The goal is to eliminate any single point of failure. Our work often involves helping to install the very systems that provide this crucial redundancy in a Mission Critical Facility.
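
The payoff from redundancy can be illustrated with standard reliability arithmetic. This is a simplified sketch that assumes component failures are independent, which real facilities only approximate (a shared fault can take out both paths at once). The 99.9% figure and the function names are illustrative.

```python
def parallel_availability(component_availability: float, copies: int) -> float:
    """Availability when any one of `copies` redundant components can carry the load.

    The system is down only when every copy is down at the same time.
    """
    return 1 - (1 - component_availability) ** copies

def series_availability(*availabilities: float) -> float:
    """Availability of a chain in which every component must be working."""
    result = 1.0
    for a in availabilities:
        result *= a
    return result

single_path = 0.999  # hypothetical availability of one power path
print(f"one path:          {single_path:.6f}")
print(f"two redundant:     {parallel_availability(single_path, 2):.6f}")
print(f"two in a chain:    {series_availability(single_path, single_path):.6f}")
```

Under the independence assumption, duplicating a 99.9% path raises availability to about 99.9999%, while chaining two such components in series lowers it to about 99.8%. That is why eliminating single points of failure matters more than perfecting any one component.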

Understanding Data Center Tiers

To standardize reliability, the Uptime Institute developed a widely recognized Tier Classification System. This helps businesses align infrastructure investments with availability needs. You can learn more directly from The Uptime Institute’s Tier Standard.

- Tier I (Basic Capacity): Offers basic capacity with a single path for power and cooling and no redundancy. It’s susceptible to disruptions. Uptime: 99.671%.

- Tier II (Redundant Components): Includes redundant power and cooling components but still has a single distribution path, allowing for some maintenance. Uptime: 99.741%.

- Tier III (Concurrently Maintainable): A Tier III data center facility is concurrently maintainable, with multiple power and cooling paths. Any component can be maintained or replaced without affecting IT operations. Uptime: 99.982%.

- Tier IV (Fault Tolerant): The highest tier, offering fault tolerance with multiple independent and physically isolated systems. A single equipment failure or path interruption will not affect IT operations. Uptime: 99.995%.

Another important standard is TIA-942, which provides guidelines for the design and construction of data centers.
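
The tier uptime percentages above are easier to compare once converted into downtime per year. A minimal sketch, assuming a 365-day year (the function name is illustrative):

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years

def annual_downtime_hours(availability_percent: float) -> float:
    """Convert an availability percentage into allowed downtime hours per year."""
    return (1 - availability_percent / 100) * HOURS_PER_YEAR

levels = {
    "Tier I": 99.671,
    "Tier II": 99.741,
    "Tier III": 99.982,
    "Tier IV": 99.995,
    "Five nines": 99.999,
}
for name, pct in levels.items():
    hours = annual_downtime_hours(pct)
    print(f"{name:<11}{pct}%  {hours:6.2f} hours/year  ({hours * 60:7.1f} minutes)")
```

Tier I works out to roughly 28.8 hours of downtime a year, Tier III to about 1.6 hours, Tier IV to about 26 minutes, and five nines to just over 5 minutes.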

The Role of Operational Staff in a Data Center Facility

While technology is vital, the human element is indispensable for a seamlessly functioning data center facility.

- Data Center Technicians, Facilities Engineers, Network Operations, Security Personnel: A skilled team works 24/7, including technicians for hardware, engineers for power and cooling, network specialists for connectivity, and security personnel.

- 24/7 Monitoring and Maintenance: Teams perform continuous monitoring, preventative maintenance, and immediate response to alerts, preventing minor issues from becoming major outages.

- The Human Element of Reliability: Despite automation, the expertise of operational staff is critical for troubleshooting, implementing upgrades, and enforcing protocols to maintain high reliability.

Future Trends and Challenges for the Data Center Facility

The data center landscape is constantly evolving, driven by digital demand and environmental awareness. The future data center facility must handle immense computational loads while minimizing its ecological footprint.

The Impact of AI and High-Density Computing

Artificial intelligence and machine learning are fundamentally reshaping data center requirements.

- Increased Power Requirements: AI workloads demand far more power than conventional computing. U.S. data center power demand is expected to roughly double to 35 gigawatts (GW) by 2030, up from 17 GW in 2022, largely driven by AI.

- Higher Rack Densities: While traditional racks draw a few kilowatts of power, AI-optimized racks can draw tens or even hundreds of kilowatts, creating unprecedented heat densities.

- Need for Advanced Liquid Cooling Solutions: As heat densities soar, conventional air cooling is insufficient. Liquid and immersion cooling are becoming necessary for AI, requiring new infrastructure and specialized installation.
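
The projection of 17 GW in 2022 growing to 35 GW by 2030 can be turned into an implied yearly growth rate. This sketch assumes steady compound growth between the two endpoints, which is a simplification since real demand will not rise smoothly.

```python
def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that takes `start` to `end` over `years` years."""
    return (end / start) ** (1 / years) - 1

rate = implied_annual_growth(17, 35, 2030 - 2022)
print(f"Implied growth in U.S. data center power demand: {rate:.1%} per year")
```

The result is a little over 9% per year, in line with the roughly 10% annual industry growth cited earlier.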

Sustainability and Environmental Concerns

The digital age comes with a significant environmental cost, a pressing challenge for the industry.

- Energy Consumption: Data centers are energy-intensive. Global consumption was around 415 terawatt hours (TWh) in 2024 (1.5% of global demand) and could double by 2030, as detailed in the Data Centres and Data Transmission Networks report.

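- Power Usage Effectiveness (PUE): PUE measures energy efficiency as the ratio of a facility’s total energy use to the energy consumed by its IT equipment, so 1.0 is the ideal. While the industry average remains well above that, state-of-the-art facilities achieve PUEs close to 1.1. Improving PUE is crucial for reducing energy waste.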

- Water Usage: Cooling systems are water-intensive. A 100-megawatt facility can use 2 million liters of water daily. The global footprint is 560 billion liters annually and may double by 2030. This is a concern as many new data centers are in water-stressed areas.

- Greenhouse Gas Emissions: Energy use creates significant carbon emissions, projected to rise from 220 million tonnes in 2024 to over 300 million tonnes by 2035.

- E-waste Management: Rapid IT refresh cycles create substantial e-waste (62 million metric tons globally in 2022). Generative AI could add up to 5 million metric tons annually by 2030. With only 22% of e-waste recycled, better lifecycle management is critical.
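
Two of the metrics above reduce to simple ratios. This sketch computes PUE from hypothetical meter readings and converts the water figure cited above (2 million liters a day for a 100-megawatt facility) into liters per kilowatt-hour. It assumes the facility draws its full 100 MW around the clock, which the source does not specify, so treat the water result as a rough order of magnitude.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """PUE = total facility energy / IT equipment energy (1.0 means zero overhead)."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh

def water_liters_per_kwh(liters_per_day: float, facility_power_mw: float) -> float:
    """Water used per kWh, assuming constant draw at the stated power for 24 hours."""
    kwh_per_day = facility_power_mw * 1000 * 24
    return liters_per_day / kwh_per_day

# Hypothetical monthly meter readings: 1.1 GWh total, 1.0 GWh consumed by IT equipment.
print(f"PUE: {pue(1_100_000, 1_000_000):.2f}")

# Figures cited above: 2 million liters per day at a 100 MW facility.
print(f"Water: {water_liters_per_kwh(2_000_000, 100):.2f} liters per kWh")
```

With these inputs the PUE is 1.10, meaning 10% overhead on top of the IT load, and the water use comes to roughly 0.8 liters for every kilowatt-hour consumed.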

The Rise of Modular and Flexible Design

Modular designs are gaining traction for rapid deployment, scalability, and adaptability.

- Prefabricated Modules: Modular data centers use pre-engineered, standardized modules assembled on-site, reducing construction time and cost.

- Scalability and Faster Deployment: This “plug-and-play” approach allows businesses to scale by adding modules as needed, enabling faster deployment.

- Crane Use for Building Data Centers: This modular approach relies on specialized heavy lifting. The precise placement of large, prefabricated modules—IT containers, power units, or cooling skids—is where our expertise is critical for keeping complex construction projects on schedule.

- Flexibility for Future Tech: Modular designs are more adaptable to future technological changes, allowing for easier upgrades.

- Community and Social Impacts: Large-scale data centers can challenge local communities with noise pollution, strained power grids, and resource competition. These social implications, detailed in academic research like this study on data center geographies, are leading to growing community resistance.

Conclusion

The data center facility is the unsung hero of the digital age, a complex powerhouse underpinning our connected lives. It has evolved from its 1940s origins to today’s hyperscale and edge facilities, adapting to meet the insatiable demand for data.

We’ve explored its core components: computing, power, cooling, and security. We’ve also covered the different types—enterprise, colocation, cloud, and edge—and their focus on uptime and reliability through standards like the Uptime Institute’s Tiers.

Looking ahead, the industry faces significant challenges and opportunities. The AI explosion demands more power and advanced cooling, while sustainability concerns push for greener practices. Modular designs offer a path to faster, more adaptable deployment.

Building these facilities requires specialized construction and logistics expertise. The precision rigging of massive power units, generators, and modular components is a monumental task.

At Sky Point Crane, we understand these challenges intimately. Our comprehensive crane services, 3D lift planning, and NCCCO certified operators are designed to support the complex construction projects that bring these vital facilities to life across Western and Central Pennsylvania, Ohio, West Virginia, and Maryland. We pride ourselves on ensuring safety and efficiency, enabling our clients to build the robust infrastructure that powers our digital future.

The demand for digital services will continue to grow, driving the need for advanced, sustainable data centers. The journey is far from over, and we’re excited to be a part of it.